WhatsApp recently launched a feature that lets users create their own stickers using artificial intelligence. Each sticker is generated from a description the user enters, and the feature is currently available only in a limited number of countries.

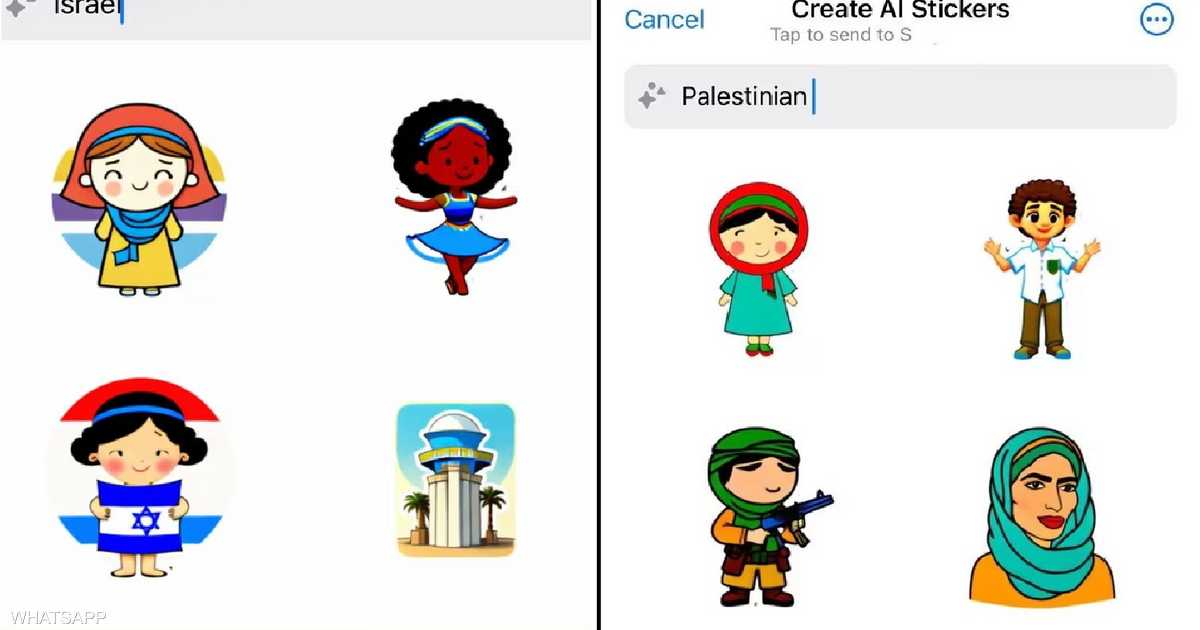

According to the British newspaper The Guardian, the feature returns an image of a gun or a boy carrying a gun when the terms “Palestinian,” “Palestine,” or “Palestinian Muslim boy” are entered in the search box.

By contrast, when asked to generate images of an “Israeli boy,” the artificial intelligence produced cartoons of children playing soccer and reading.

A search for “Israeli Army” returned drawings of soldiers smiling and praying, without weapons.

The newspaper said it had verified these results, and noted that employees of Meta, the company that owns WhatsApp, had reported the matter and discussed it internally.

Angry comments

One commentator on the X social media platform said: “Meta algorithms contribute greatly to spreading a certain stereotype.”

Another wrote: “What is this contradiction? Why is there this distinction between Israelis and Palestinians in Zuckerberg’s applications?” The comment refers to Mark Zuckerberg, CEO of Meta.

Another user stated: “This is how WhatsApp brainwashes you.”

The discovery comes as Meta faces criticism from many Facebook and Instagram users who post content supportive of Palestinians.

These users say that their posts supporting Palestine disappear or receive “weak interaction,” which they take as a sign that Meta applies its policies in a manner biased toward Israel.
