NY.- To Claes de Graaff, Franz Broseph seemed like any other Diplomacy player. The handle was a joke, with Austrian Emperor Franz Joseph I reborn as an online bro, but that was the kind of humor people who play Diplomacy tend to enjoy. The game is a classic, beloved by the likes of John F. Kennedy and Henry Kissinger, combining military strategy with political intrigue while recreating World War I: players negotiate with allies, enemies, and everyone in between while planning how they will move their armies across 20th-century Europe.

When Franz Broseph joined a 20-player online tournament in late August, he courted other players, lied to them, and eventually betrayed them. He finished in first place.

De Graaff, a chemist living in the Netherlands, finished fifth. He had spent almost 10 years playing Diplomacy, both online and in live tournaments around the world. He did not realize he had lost to a machine until it was revealed several weeks later. Franz Broseph was a bot.

“I was flabbergasted,” said de Graaff, 36. “He seemed so genuine, so down to earth. He could read my texts and talk to me and make plans that would be mutually beneficial, that would allow us both to get ahead. He also lied to me and betrayed me, as the best players usually do.”

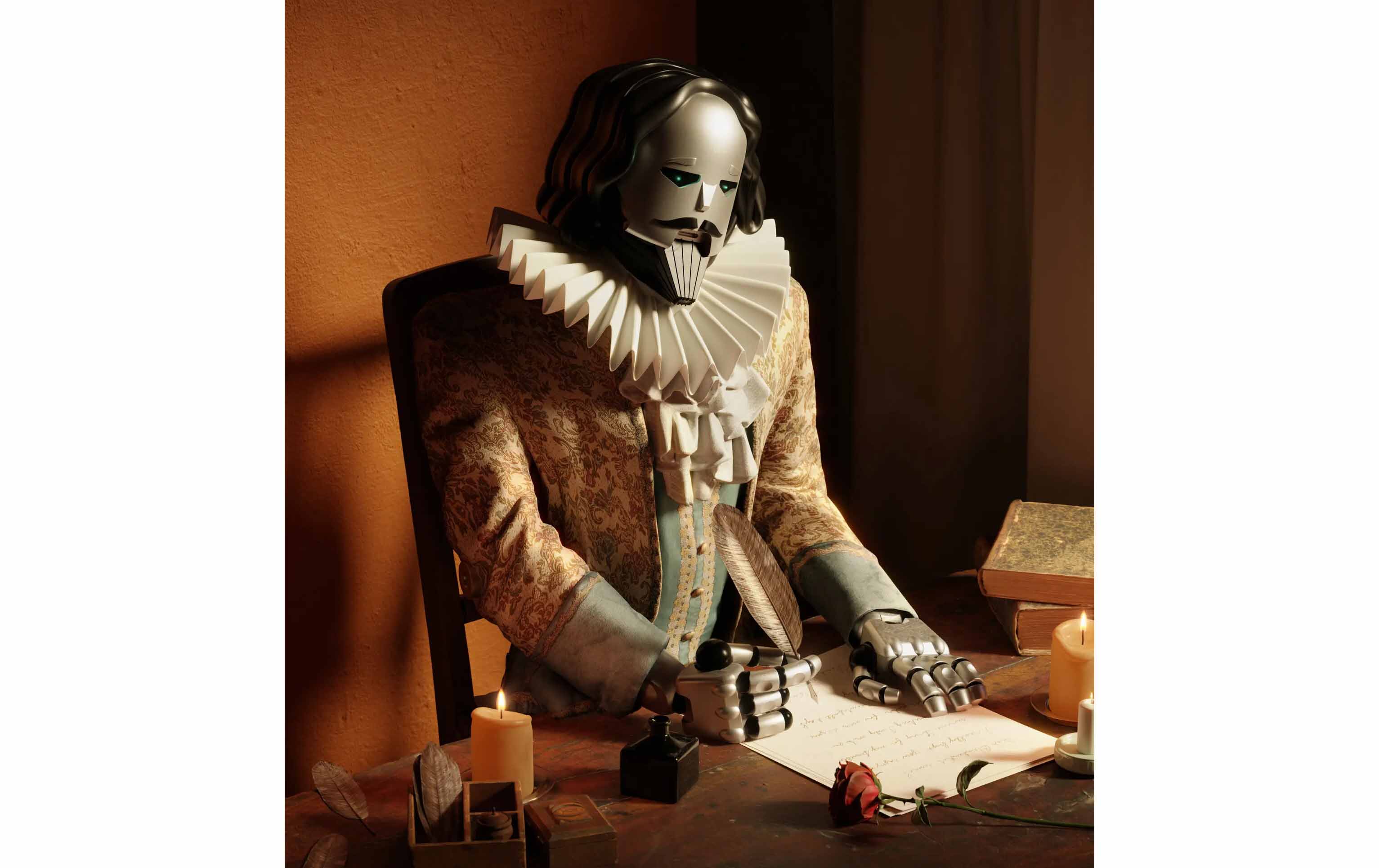

Built by a team of AI researchers from tech giant Meta, the Massachusetts Institute of Technology and other leading universities, Franz Broseph is among the new wave of online chatbots that are rapidly moving machines into new territory.

When you chat with these bots, it can feel like you are chatting with another person. It can feel, in other words, as if the machines have passed a test that was supposed to demonstrate their intelligence.

For more than 70 years, computer scientists have struggled to develop technology that could pass the Turing test: the technological tipping point at which humans are no longer sure whether they are chatting with a machine or a person. The test is named after Alan Turing, the famous British mathematician, philosopher, and wartime code breaker who proposed the test in 1950. He believed it could show the world when machines had finally achieved true intelligence.

The Turing test is a subjective measure. It depends on whether the people asking the questions feel convinced that they are talking to another person when they are actually talking to a machine.

But whoever is asking the questions, the machines will soon leave this test in the rearview mirror.

Bots like Franz Broseph have already passed the test in particular situations, such as negotiating Diplomacy moves or calling a restaurant to book a dinner. ChatGPT, a bot launched in November by OpenAI, a San Francisco lab, leaves people feeling like they’re chatting with another person, not a bot. The lab said more than a million people had used it. Because ChatGPT can write almost anything, including term papers, universities fear it will make a mockery of class work. When some people talk to these bots, they even describe them as sentient or conscious, believing that the machines have somehow developed an awareness of the world around them.

Privately, OpenAI has created a system, GPT-4, that is even more powerful than ChatGPT. It can even generate images in addition to words.

And yet these bots are not sentient. They are not conscious. They are not intelligent, at least not in the way that humans are intelligent. Even the people who build the technology recognize this point.

These bots are pretty good at certain types of conversation, but they can’t respond to the unexpected as well as most humans. Sometimes they talk nonsense and cannot correct their own mistakes. Although they can match or even exceed human performance in some respects, they cannot in others. Like similar systems before them, they tend to supplement skilled workers rather than replace them.

Part of the problem is that when a bot imitates a conversation, it can appear more intelligent than it really is. When we see a glimpse of human behavior in a pet or machine, we tend to assume that it behaves like us in other ways, too, even when it doesn’t. The Turing test does not consider that humans are naturally gullible, that words can so easily fool us into believing something that is not true.

As the latest technologies emerge from research labs, it is now obvious, if it was not before, that scientists need to rethink and reshape the way they track the progress of artificial intelligence. The Turing test is not up to the task.

Time and again, artificial intelligence technologies have passed supposedly insurmountable tests, such as mastery of chess (1997), “Jeopardy!” (2011), Go (2016) and poker (2019). Now they are surpassing another, and again this does not necessarily mean what we thought it would.

We, the public, need a new framework to understand what artificial intelligences can do, what they cannot do, what they will do in the future, and how they will change our lives, for better or worse.
